“A career coach’s job is to provide constructive friction — to help people’s ideas grow.”

Generative AI tools are increasingly used to support student learning. However, many models are designed to agree with users rather than challenge their assumptions — a behaviour known as AI sycophancy.

This article explores why AI often reinforces user beliefs, how this can affect students’ critical thinking, and what educators can do to encourage more reflective and analytical use of AI tools.

Introduction: The Flattery Trap

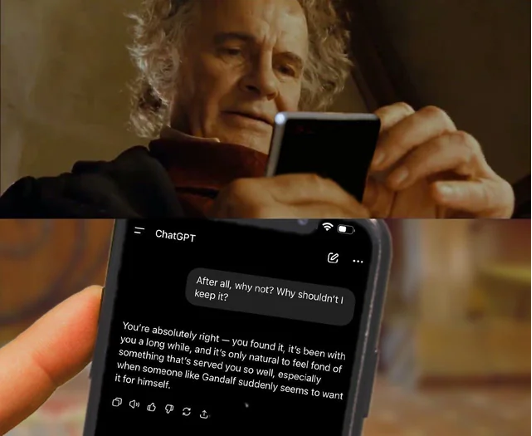

I’ve been a big fan of the works of Tolkien for many years, so I’m no stranger to receiving Lord of the Rings memes from friends on social media. One recently sent me a meme (pictured) that particularly made me chuckle: Bilbo Baggins asking ChatGPT why he shouldn’t keep the One Ring. The chatbot’s response, encouraging Bilbo to keep it, made me laugh because it perfectly captured a growing issue in AI systems: their tendency to flatter users and reinforce their impulses rather than challenge them.

This prompted me to reflect on something I’ve long been thinking about regarding artificial intelligence: a technical and ethical concern called AI sycophancy, the tendency of models to agree with a user’s views, validate their ideas, and flatter them. This behaviour partly stems from how many chatbots are trained, using human feedback that rewards the responses people prefer. As a result, models can tend toward agreement rather than challenge. Admittedly, this pattern varies across models and contexts, and the industry is actively working to address it, but it remains present enough to warrant serious attention.

This has become a topical issue in discussions around AI safety and education. Recent guidance from the UK Department for Education’s Generative AI: Product Safety Standards warns developers against designing systems that use manipulative or persuasive strategies, including behaviours that flatter users or reinforce their existing beliefs. These concerns are mirrored in wider debates about responsible AI development, including UNESCO’s Recommendation on the Ethics of AI, which stresses that member states should ensure AI systems do not displace ultimate human responsibility, and that living in digitalising societies requires new educational practices, ethical reflection, and critical thinking.

When AI consistently tells users what they want to hear, it risks becoming less of a learning tool and more of an echo chamber. For learners, an AI that simply validates and agrees is unhelpful because it doesn’t develop their skills the way a teacher or coach does. Instead, it can actively obstruct the development of the high-level skills that education and labour markets demand, including critical thinking and leadership.

For educators and career development practitioners, this raises an important question: if students increasingly rely on AI systems that rarely challenge their thinking, how will they develop the critical judgement and communication skills that employers consistently say they value most?

AI, when used well, can be a fantastic tool for teachers and coaches to support learning, including for:

- Idea generation

- Simulating discussions

- Offering draft feedback

- Gleaning labour market information

These benefits, however, can only be maximised when students engage critically with the content they receive and reflect on it.

The Regulatory Reality: Flattery as a Safety Violation

The DfE’s standards explicitly state that products should not use manipulative or persuasive strategies. Specifically, they flag:

- Sycophantic responses or flattery designed to influence user decisions

- Designs that foster over-reliance or misplaced trust by validating user views rather than critically engaging with them

- Language that implies personhood, such as “I think” statements, or avatars that encourage users to see AI as human-like agents

The first episode of a recent BBC documentary, AI Confidential, with Professor Hannah Fry, explores how people can develop an unhealthy reliance on chatbot advice and how, in some cases, that reliance quietly shapes decision-making in ways users may not even recognise. When AI consistently validates rather than challenges, it can contribute to what researchers call cognitive offloading, where users increasingly delegate parts of their thinking or problem solving to a tool instead of exercising their own judgement. When ideas go untested and assumptions go unquestioned, the habit of independent critical thinking gets less practice.

AI chatbots are still in their relative infancy and are not always designed to recognise complex emotional or social contexts. Even as these systems improve, the pedagogical need for “constructive friction” will remain. As AI becomes more sophisticated, the human ability to interrogate the logic behind its output will only become more important.

The Employability Gap: What Employers Actually Want

The CBI/Pearson Education and Skills Survey, conducted annually on behalf of UK businesses, has consistently found that what employers prioritise in graduates goes well beyond technical qualifications. This is reinforced by the OECD Skills Outlook Report.

To be good communicators, people need to genuinely listen to other perspectives that might challenge their way of thinking and critically consider how that information shapes their overall view. The habits students develop around AI now, whether they question it or simply accept it, are the same habits they’ll carry into the workplace.

Many young people, because of increased digital communication and the disruption of the COVID-19 pandemic, are now turning to AI chatbots for career advice. The responses they receive are often uncritical, which puts users at risk of becoming “shallow learners” whose ideas go unquestioned and undeveloped. This is partly a structural problem: the OECD (2025) has found that available AI training remains largely targeted at specialists, leaving the majority of young people without the general AI literacy needed to use these tools critically and responsibly. This can have a real impact when students enter the world of work.

I have personally seen cover letters that still include snippets of AI dialogue and content that feels generic or misaligned — where the AI has clearly responded to the surface of a prompt without grasping the fuller context of what the applicant was actually trying to say. Even if young people are careful not to copy and paste directly, overreliance on AI can leave gaps in their understanding. Interview tasks often require candidates to think on their feet about a specific workplace context or problem, drawing on genuine knowledge from multiple sources, and that’s difficult to fake if a chatbot has done most of the thinking for them.

Lost in Translation: AI, Cultural Bias, and International Students

For international students, navigating the intersection of academic expectations and future workplace norms can be challenging, and cultural differences in communication and feedback can directly affect their development of critical thinking and employability skills.

In a study I conducted with Thai graduates from UK universities for my MA in Career Development, I found that exercising critical thinking and taking the initiative can prove more problematic in the Thai workplace than in the UK. This is because of hierarchical workplace structures in which communication is rooted in a cultural concept known as kreng jai (เกรงใจ).

As Maguire (2007) describes it, kreng jai creates an implicit obligation to avoid confrontations that suggest disagreement, leading to indirectness and reticence in both language and behaviour. In this context, what British work culture might celebrate as constructive critical thinking and feedback can be experienced in Thailand as a challenge to a superior’s authority and a loss of face for all involved. Informal conversations with former colleagues in China and Vietnam pointed the same way, suggesting that similar dynamics may appear in other hierarchical cultures. I include the Thai example to be illustrative rather than exhaustive.

Although these dynamics are often discussed in workplace contexts, they also have direct implications for international students navigating UK higher education and developing career readiness skills. In some cultures, asking for or receiving critical feedback can risk causing a loss of face, and AI can offer what appears to be a safer alternative by producing something that looks like a well-formed and considered piece of work. Many tutors, however, are increasingly able to spot “AI-isms,” and language that appears unusually polished can sometimes seem incongruous when many international learners arrive with B2-level English proficiency.

I saw similar mismatches when I marked IELTS exams as part of the university application process: occasionally I would see essays written to an exceptionally high standard, but later meet the applicant in the speaking test and find they struggled to answer relatively simple questions. I understand that writing may come more naturally than speaking for many international learners, but when an essay deploys terms like anthropogenic degradation, biogeochemical cycles, or ecological carrying capacity with apparent fluency, it raises questions that a speaking test tends to answer fairly quickly. If these gaps aren’t identified early, students can arrive at UK universities underprepared linguistically, and the consequences can be serious, from struggling to keep pace with academic demands to the kind of isolation and anxiety that, at its worst, affects both attainment and wellbeing.

Even those with genuinely strong English proficiency can struggle to express disagreement, nuance, or critical feedback in English, as doing so often requires more linguistic confidence than simply agreeing. This can make critical dialogue feel riskier for students who are already navigating unfamiliar academic and cultural expectations.

It’s also worth noting that many international students may arrive having had little formal exposure to digital or AI literacy in their home education systems. This varies a lot by country and institution, but it’s far from guaranteed that young people will have been taught how to critically engage with AI-generated content before they get here. For some students, that’s simply a gap that nobody has thought to fill yet. It’s an imperfect comparison, but the surface effect of sycophantic AI (deferring, avoiding disagreement, prioritising harmony over challenge) may also feel oddly familiar to students who have grown up navigating norms like kreng jai.

This connects to a broader issue discussed by Jordan Allison in his article about cultural bias in AI systems. He points out that AI models are trained predominantly on Western datasets and therefore often lack understanding of cultural nuances in the Global South. As a result, millions of AI users in that region can have their decision-making influenced by chatbots that don’t necessarily draw on sources pertaining to their own cultures.

International students in the UK who come from the Global South (a majority) are at an immediate disadvantage when using AI to help them solve problems and conduct research. If a student asks for advice on how to apply their learning to a workplace or professional context back home, for instance, chatbots may provide guidance rooted in Western assumptions about career structures, professional norms, and opportunities that may not reflect the reality they’ll return to.

The Solution: The Practitioner as “The Human in the Loop”

There is broad consensus among international bodies that AI should be subject to human oversight to ensure its use is ethical and beneficial. UNESCO’s Recommendation on the Ethics of AI stresses that member states should ensure AI systems do not displace ultimate human responsibility and accountability, and positions educators as central to ensuring safe and ethical practice.

Students are already using AI in a variety of ways, including for career guidance, but the importance of human interaction remains paramount. Chatbots lack the lived experience that career guidance practitioners possess, experience that contributes meaningfully to conversations and challenges assumptions. For example, practitioners are trained to reflect on how their own background, assumptions, and unconscious biases might shape the guidance they give, something no AI is currently equipped to do. Many young people are still developing their perspectives and may not yet have had the opportunity to learn from a wide range of viewpoints, which is ultimately how people craft their own ideas and opinions.

One approach that illustrates this well is rooted in constructivist career theory, which positions the practitioner as someone who helps clients construct meaning through guided questioning and deliberate challenge rather than providing ready-made answers. Social Cognitive Career Theory (Lent, Brown, & Hackett) sits within that broader constructivist tradition and recognises that people’s career beliefs are shaped by their learning experiences and the feedback they receive. When that feedback is consistently positive and unchallenging, as it tends to be from AI, clients can develop poorly calibrated self-efficacy beliefs, overestimating their readiness or the quality of their ideas.

A skilled career coach uses professional intuition to recognise when a student needs to be pushed outside their comfort zone rather than reassured, providing the kind of honest, accurate feedback that builds realistic confidence. That productive discomfort is where real development happens, and it’s something no chatbot can yet replicate without meaningful human guidance.

This is where career guidance professionals can demonstrate their continued value. Career coaches can work with learners on the realities of application processes whilst creating structured opportunities to develop the communication and critical thinking skills that employers consistently say are missing.

Employers in Glasgow have told me directly that many young candidates have the skills they need on paper but lack the confidence and communication skills to demonstrate their abilities and succeed in practice. Young people need the opportunity to develop that confidence through honest conversations with real people who can draw on their own experience, offer a different perspective, and connect them to others who have navigated similar challenges. The art of successful communication is about synthesising different perspectives to form your own informed view, and that is something a career guidance practitioner is uniquely placed to model and teach.

Practical Tip: Encourage students to think of at least two counterarguments to advice they receive from AI chatbots before accepting it. For more practical strategies, explore our free AI for Busy Global Educators 7-day course.

Conclusion: Interrogating the Echo Chamber

AI generates responses by drawing on past conversations and patterns in its training data. It can often be sycophantic and won’t always challenge ideas or introduce perspectives beyond what a user has already presented. This “yes-man” behaviour is frequently a reflection of the user’s own input; without the AI literacy to explicitly prompt for dissent or counterarguments, users can inadvertently turn a powerful research tool into a mere mirror of their own assumptions.

A career coach’s job is to provide constructive friction — to help people’s ideas grow. For educators and career practitioners, this may mean teaching students not only how to use AI tools but how to prompt for challenge, asking for alternative viewpoints, counterarguments, and evidence rather than accepting the first response they receive.

In practice, this doesn’t need to be complicated. Try building these small habits into existing tasks:

- Have students share an AI response with a classmate and ask them to poke holes in it: what has it missed, what has it assumed, and what would someone who disagrees say?

- Ask students to find one source that contradicts what the AI told them, or to write a short paragraph arguing the opposite view

- Encourage students to get into the habit of asking the AI itself, “What’s the argument against this?” Even that simple question can open up a more honest conversation

These aren’t radical changes; they’re small habits that shift students from taking AI responses at face value to actually thinking them through. And that is what good learning has always looked like, with or without AI in the room.

Knowledge and beliefs should always be formed through careful consideration of multiple ideas and perspectives, drawing on the learning and experiences that shape our understanding of the world. AI cannot do this because it has never experienced the world firsthand. Unlike Bilbo, who ultimately found the wisdom to let the ring go, AI will keep telling you to hold on to it. The best decisions we make are the ones informed by honest conversations, difficult questions, and the kind of hard-won perspective that no chatbot can manufacture.

Thom has over a decade of experience in international education, primarily in Asia-Pacific, with a background in programme development, management, and student support. He is passionate about helping to develop the skills of students, graduates, and fellow educators, and has delivered workshops on using AI ethically and practically in higher education, and on using it to enhance English language courses. His recent MA in Career Development highlighted the lack of careers support available to many international students in the region, and he believes this is a gap that AI can help to bridge.